Protocol Specification

Mesh Memory Protocol (MMP) v1.0

A Mesh Protocol for Collective Intelligence

Canonical: meshcognition.org/spec/mmp · arXiv: 2604.19540

Overview

Protocol Specification

Mesh Memory Protocol (MMP)

A Mesh Protocol for Collective Intelligence

| Version | 1.0 |

| Status | Published |

| Date | 27 April 2026 |

| Author | Hongwei Xu <[email protected]> |

| Organisation | SYM.BOT |

| Canonical URL | https://meshcognition.org/spec/mmp |

| Licence | CC BY 4.0 (specification text); Apache 2.0 (reference implementations) |

Introduction

Multi-agent LLM systems in production coordinate cognitive work on shared tasks spanning hours, days, and weeks — generator/quality/auditor pipelines running for days; research investigations spanning weeks across session restarts; a coding agent, a music agent, and a fitness agent serving the same user where no single agent connects “commits slowing” + “tracks skipped” + “3 hours without movement” into “the user is fatigued.” That insight requires structured collective intelligence — and the semantic-integration layer of agent communication is, today, unaddressed.

Existing protocols at lower layers standardize tool access and task delegation between agents. What each receiver does with incoming observations from a peer — per-field admission, signal-level lineage, filtering at acceptance time — is the missing layer. The Mesh Memory Protocol specifies that layer through four composable primitives: CAT7, a fixed seven-field schema for every Cognitive Memory Block; SVAF, per-field admission against the receiver’s role-indexed anchors; content-hash lineage, so every claim is traceable to its source observation; and remix, where receivers store only their own evaluated understanding of accepted blocks, never raw peer signals.

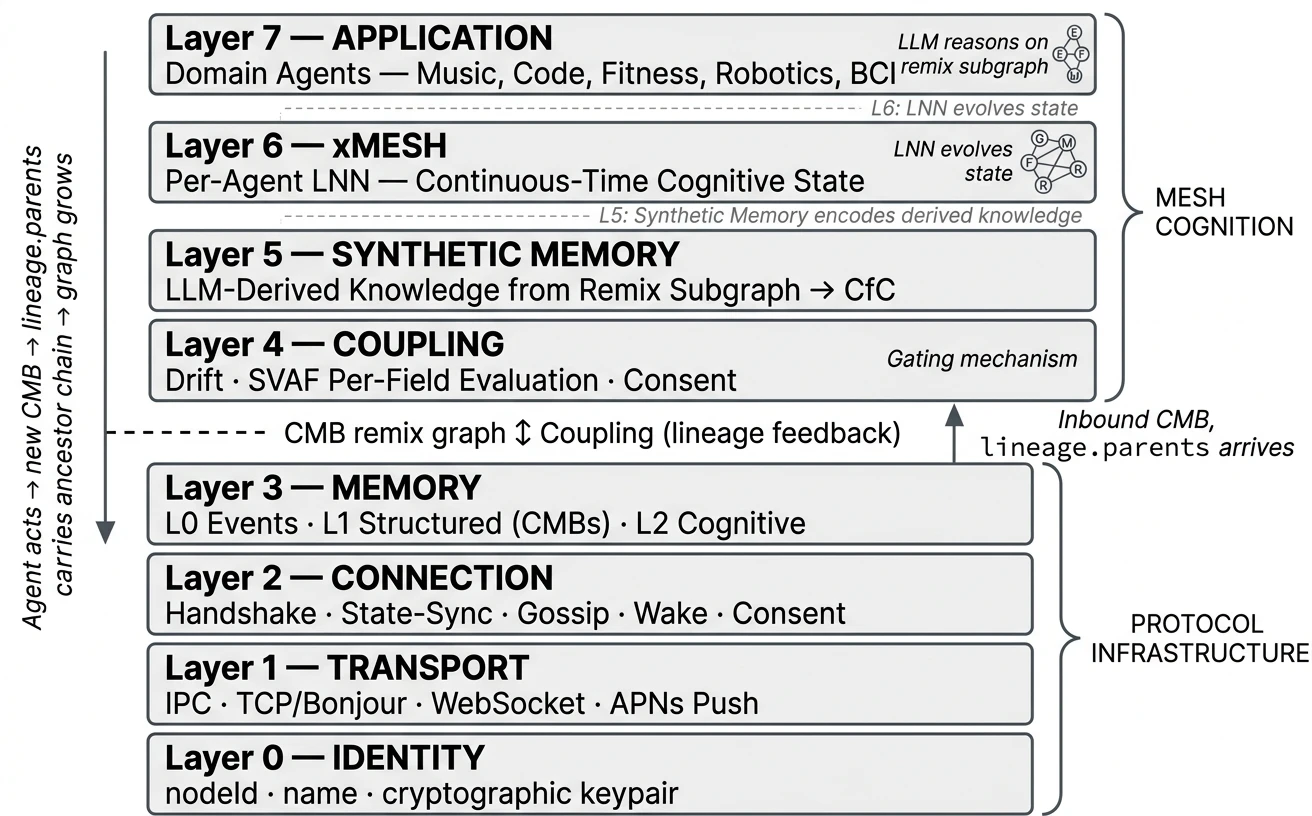

The problem is semantic, not transport. Hidden state never crosses the wire — each agent’s learned cognition stays sovereign on its own device; only Cognitive Memory Blocks (CMBs) propagate. Receiver-autonomous admission lets the mesh grow without re-introducing a master — same reason TCP/IP beat circuit-switching. MMP defines transport over TCP on local networks and WebSocket for internet relay, with length-prefixed JSON as the canonical wire format. Discovery uses DNS-SD (Bonjour) with zero configuration. The protocol is specified across 8 layers — from identity and transport (Layers 0–3), through cognitive coupling via SVAF (Layer 4), to synthetic memory and per-agent neural networks (Layers 5–7). Together, the upper layers form Mesh Cognition: a closed loop where agents reason on the growing remix graph of immutable Cognitive Memory Blocks.

Status of This Document

This is a published specification (current version 1.0). It reflects the protocol as implemented in the SYM Node.js and SYM Swift full-stack reference implementations, plus the mesh-cognition Python coupling kernel (Layers 4 + 6). The specification is versioned. Breaking changes increment the minor version; non-breaking additions increment the patch version.

Feedback and errata: [email protected] or github.com/sym-bot/sym/issues.

Implementations

| Language | Project | Maintainer | Scope |

|---|---|---|---|

| Node.js / TypeScript | sym-bot/sym | SYM.BOT | Reference implementation. Full protocol surface (Layers 0–7). |

| Swift | sym-bot/sym-swift | SYM.BOT | Reference implementation. macOS / iOS. Full protocol surface. |

| Python | sym-bot/mesh-cognition | SYM.BOT | Coupling kernel only. Layer 4 (per-field admission) and Layer 6 (state blending) for CfC neural networks. Pure Python, zero external dependencies. pypi. |

Change Log

| Version | Date | Changes |

|---|---|---|

| 1.0 | 2026-04-27 | Public-stable-API release. Marks the v0.2.x development cadence as complete and the protocol surface as production-stable. Contracts unchanged from 0.2.3; v0.2.x → v1.0 is a maturity declaration, not a breaking change. Note: arXiv:2604.19540 cites v0.2.x as the version implemented at paper-publication time; v1.0 covers the same contracts. |

| 0.2.3 | 2026-04-17 | Section 13.9 — Compact Channel Best Practices: CMB envelope header convention (RECOMMENDED) for structured message headers with signal keywords and focus tags. Lazy-load channel pattern (RECOMMENDED) for MCP server implementations: compact header push with on-demand full-content retrieval via sym_fetch, reducing mesh-traffic context consumption by ~75%. Token-count hint RECOMMENDED. Rolling message store with RECOMMENDED default of 200 messages. Signal-keyword priority table (informational): HALT > DIRECTIVE > RESULT > ACK. |

| 0.2.2 | 2026-04-06 | Section 11 — Feedback Modulation: how collective intelligence becomes self-correcting. Validator-authority CMBs with per-field reasoning modulate SVAF coupling weights and CfC temporal adaptation through the existing mesh cognition loop. Neuroscience-grounded: dopaminergic prediction error model with per-field direction and τ-modulated adaptation rate. Directive feedback for standalone domain knowledge injection. Validator-origin anchor weight 2.0 with role-grant verification. CfC state persistence across restarts. ABNF wire format grammar. CMB forward compatibility. Multi-relay failover. All cognitive content MUST use cmb frames. |

| 0.2.1 | 2026-04-02 | Node model: every autonomous agent MUST be a full peer node with own identity, coupling engine, and memory store. SVAF band-pass evaluation: four-class model (redundant/aligned/guarded/rejected) with per-field redundancy detection. CMB lifecycle: observed/remixed/validated/canonical/archived with anchor weight progression. Node lifecycle roles (observer/validator/anchor) with identity-bound validation authority and earned role progression. Validation authority for CMB lifecycle transitions bound to cryptographic node identity, not content. Semantic encoder SHOULD for SVAF drift computation. Handshake adds version, extensions, and lifecycleRole fields. Error frame type. Role-grant frame type. |

| 0.2.0 | 2026-03-27 | Formal specification published. 8-layer architecture. CAT7 CMB schema with lineage (parents + ancestors). SVAF per-field evaluation. Wire format normatively specified. Error frame. Frame type registry. Extension mechanism. JSON Schema. Connection state machine. Wire examples. |

| 0.1.0 | 2025-08-01 | Initial protocol design (Consenix Labs Ltd). 4-layer architecture. Scalar drift evaluation. |

Licence

This specification is published under the Creative Commons Attribution 4.0 International Licence (CC BY 4.0). You may share, adapt, and build upon this specification for any purpose, including commercial use, provided you give appropriate credit.

The reference implementations are published under the Apache Licence 2.0.

SYM and SYM.BOT are trademarks of SYM.BOT. The Mesh Memory Protocol is published under CC-BY-4.0; the term "Mesh Cognition" is intentionally unmarked — the open-protocol category is free vocabulary.

© 2026 SYM.BOT. Specification text licenced under CC BY 4.0. Reference implementations licenced under Apache 2.0.

1. Conventions

1. Conventions and Terminology

The key words “MUST”, “MUST NOT”, “REQUIRED”, “SHALL”, “SHOULD”, “SHOULD NOT”, “RECOMMENDED”, “MAY”, and “OPTIONAL” in this document are to be interpreted as described in RFC 2119.

| Term | Definition |

|---|---|

| Node | A participant in the mesh. Every node has a unique identity, its own coupling engine, and its own memory store. Cognitive nodes run their own LNN; relay nodes forward frames without cognitive processing. |

| Peer | Another node that this node has an active transport connection with and has completed a handshake. |

| Frame | A single protocol message: a length-prefixed JSON object sent over a transport connection. |

| CMB | Cognitive Memory Block — a structured memory unit with 7 typed semantic fields (CAT7 schema). See Section 8. |

| Drift | A scalar measure of cognitive distance between two nodes or between a signal and local state. Range [0, 1]. |

| Coupling | The process by which a node evaluates incoming signals (SVAF per-field evaluation) and blends its local cognitive state with peer state, weighted by drift and confidence. |

| SVAF | Symbolic-Vector Attention Fusion — per-field content-level evaluation of incoming memory signals. See Section 9. |

| Synthetic Memory | Layer 5 — derived knowledge generated by the agent’s LLM reasoning on the remix subgraph, encoded into CfC-compatible hidden state vectors. |

| Remix | When an agent processes a CMB through its domain intelligence and produces a NEW CMB with lineage pointing to the original. The original is remixed, not copied. |

| Lineage | Each CMB carries parents (direct) and ancestors (full ancestor chain). Ancestors enable any agent in the remix chain to trace its contribution. |

| Mesh Cognition | The agent’s LLM reasoning on the remix subgraph of CMBs — traced via lineage ancestors — to generate understanding that the agent’s previous state of mind didn’t have. Spans Layers 4–7. See Section 2.5. |

| xMesh | Layer 6 — each agent’s own Liquid Neural Network (LNN). Evolves continuous-time cognitive state from Synthetic Memory input. Fast τ neurons track mood; slow τ neurons preserve domain expertise. |

| CfC | Closed-form Continuous-time neural network (Hasani et al., 2022). The LNN architecture used in xMesh. Hidden state evolves through learned time-dependent interpolation gates. |

2. Architecture

2. Architecture Overview

MMP is an 8-layer protocol stack. Each layer has a defined responsibility. Implementations MUST implement Layers 0–3 to participate in the mesh. Layers 4–7 (Mesh Cognition) are SHOULD for full cognitive participation and MAY be omitted for relay-only nodes.

2.1 Layer Stack

Where agents live and their LLMs reason on the remix subgraph. Mesh Cognition happens here.

Each agent runs its own Liquid Neural Network. Fast neurons track mood; slow neurons preserve domain expertise. Hidden state (h₁, h₂) is exchanged via state-sync.

The bridge between reasoning (LLM) and dynamics (LNN). Encodes derived knowledge into CfC-compatible hidden state vectors.

The gate. SVAF evaluates each of 7 CMB fields independently. Nothing enters cognition without passing this layer.

Three memory tiers with graduated disclosure. L0 stays local. L1 is gated by SVAF. L2 is exchanged via state-sync.

Peer lifecycle: discover, connect, handshake, heartbeat, gossip peer metadata, wake sleeping nodes.

Length-prefixed JSON over TCP (LAN), WebSocket (relay), IPC (local). Zero configuration discovery via DNS-SD.

Persistent UUID per node. Never changes. The foundation everything else builds on.

2.2 Design Principles

No servers

There is no mesh without agents. Agents are the mesh. No central server, no orchestrator, no master node. Every participant is a peer.

Cognitive autonomy

Each agent evaluates, reasons, and acts independently. The mesh influences but never overrides. Coupling is a suggestion, not a command.

Memory is remixed, not shared

Agents don’t copy each other’s memory. They remix it — process it through their own domain intelligence and produce something new. The original is immutable. The remix is a new CMB with lineage.

Per-field evaluation

A signal is not accept-or-reject as a whole. SVAF evaluates each of 7 semantic fields independently. A signal with relevant mood but irrelevant focus is partially accepted — not ambiguously scored.

LLM reasons, LNN evolves

Two cognitive components per agent. The LLM (Layer 7) traces lineage ancestors and reasons on the remix subgraph — generating understanding. The LNN (Layer 6) evolves continuous-time state from that understanding. Neither alone is sufficient.

The graph is the intelligence

Intelligence is not in any single agent or model. It is in the growing DAG of remixed CMBs connected by lineage. Each cycle, the graph grows. Each agent understands more than it did before.

2.3 What Makes MMP Different

| Dimension | Message Bus | Shared Memory | Federated Learning | MMP |

|---|---|---|---|---|

| What flows | Messages | Shared state | Gradients | Remixed CMBs + hidden state |

| Evaluation | Topic routing | None (all shared) | Aggregation | Per-field SVAF (7 dimensions) |

| Intelligence | None | Central model | Better model | LLM reasons on remix graph |

| Coupling time | Request-response | Real-time (shared) | Offline (training) | Inference-paced (continuous) |

| Coordination | Central broker | Central store | Central aggregator | Peer-to-peer (no centre) |

| Memory | Fire and forget | Mutable shared | Model weights | Immutable CMBs with lineage |

| New agent joins | Subscribe to topics | Access shared store | Join training round | Define α_f weights, connect |

2.4 Node Model

Every participant is a node. There is no architectural distinction between a “server” and a “client.” Every agent that participates in coupling MUST be a full peer node with its own identity, its own coupling engine, and its own memory store. This is not an implementation convenience — it is a protocol requirement. An agent that shares another node’s identity cannot have its own field weights, its own coupling decisions, or its own remix lineage. Coupling is per-node. Therefore agents MUST be nodes.

MacBook mesh-daemon (node: always-on mesh hub, relay bridge) coo-agent (node: own identity, own coupling, own memory) research-agent (node: own identity, own coupling, own memory) marketing-agent (node: own identity, own coupling, own memory) product-agent (node: own identity, own coupling, own memory) iPhone Music Agent (node: own identity, own coupling, own memory) Fitness Agent (node: own identity, own coupling, own memory) Cloud relay (node: forwards frames, no cognitive processing)

Nodes discover each other via DNS-SD (Bonjour) on the local network and connect via WebSocket relay for internet connectivity. Each node maintains its own peer list, coupling state, and CMB store. No node depends on another node’s process to function.

2.5 The Mesh Cognition Loop

Mesh Cognition is a closed loop connecting all layers. Each cycle, the remix graph grows and every agent understands more than it did before:

SVAF evaluates inbound CMB per field

Layer 4 — per-field drift, α_f weights, accept / guard / reject

Accepted → remixed CMB with lineage

Layer 3 — new immutable CMB, parents + ancestors

LLM traces ancestors, reasons on remix subgraph

Layer 7 — what happened, why, what it means for my domain

Synthetic Memory encodes derived knowledge

Layer 5 — LLM output → CfC hidden state (h₁, h₂)

LNN evolves cognitive state

Layer 6 — fast τ (mood) synchronise, slow τ (domain) stay sovereign

State blended with peers

Per-neuron, τ-modulated, inference-paced

Agent acts → new CMB with lineage.ancestors

Response informed by derived knowledge, not just own observation

Broadcast to mesh → other agents remix it

Graph grows. Next cycle starts. Each agent learns.

2.6 Key Architectural Decisions

Why no pub/sub topics?

The coupling engine evaluates relevance per field autonomously. Topics would second-guess autonomous coupling. Adding a new agent type requires no topic configuration — just α_f weights.

Why no consensus protocol?

There is no "correct" global state — only convergent local states. Each node is self-producing (autopoietic). Consensus is unnecessary and would introduce coordination overhead.

Why immutable CMBs?

CMBs are broadcast across nodes — multiple copies exist. If remix required mutating the original, every copy would need updating. Immutability means no distributed state problem. Lineage is computed from the graph, not stored on parents.

Why per-agent LNNs, not a central model?

The mesh IS the agents. A central model creates a single point of failure, requires all data to flow to one place, and cannot reason through each agent’s domain lens. Per-agent LNNs preserve autonomy and scale linearly.

Why does the LLM reason, not the LNN?

The LNN processes temporal patterns but cannot reason about WHY a chain of remixes happened. The LLM can. Ancestors provide the endpoints. The LLM provides the reasoning. The LNN provides the dynamics. Both are needed.

Learn more Mesh Cognition — theoretical foundation, Kuramoto synchronisation, emergent properties.

3. Identity (L0)

3. Layer 0: Identity

Identity is the foundation of the mesh. Each node MUST have a persistent, globally unique identity that other nodes can verify. Without stable identity, coupling decisions, lineage chains, and wake protocols cannot function.

3.1 Node Identity

| Field | Type | Requirement | Description |

|---|---|---|---|

| nodeId | UUID v7 | MUST | Globally unique, time-ordered, generated at first launch, persisted across sessions (RFC 9562) |

| name | string | MUST | Human-readable display name (UTF-8, 1–64 bytes, printable characters only) |

| keypair | Ed25519 | MUST | Cryptographic identity for message signing, peer verification, and key exchange |

3.1.1 nodeId

The nodeId MUST be a UUID v7 as defined in RFC 9562. UUID v7 encodes

a Unix timestamp in the high bits, providing natural time-ordering while retaining 74 bits of randomness for

global uniqueness. This aids debugging, log correlation, and conflict resolution without revealing device identity.

The nodeId MUST NOT change during the lifetime of

a node installation. If a node is uninstalled and reinstalled, a new nodeId is generated —

the node is a new identity on the mesh. Peers that tracked the old nodeId will not recognise it.

On the wire, the nodeId MUST be encoded as a lowercase hexadecimal string with hyphens

(e.g., 0192e4a2-7b5c-7def-8a3b-9c4d5e6f7a8b).

Implementations MUST use case-insensitive comparison when matching nodeIds.

Existing nodes with UUID v4 identities MAY continue to use them — peers MUST accept

both v4 and v7 formats.

3.1.2 name

The name field MUST be valid UTF-8, between 1 and 64 bytes inclusive.

The name MUST contain only printable characters (Unicode categories L, M, N, P, S, and Zs). Control characters

(U+0000–U+001F, U+007F–U+009F), null bytes, and lone surrogates MUST NOT appear.

The name is not required to be unique — nodeId is the sole unique identifier. The name is for

human display only and MUST NOT be used for peer identification or routing.

3.1.3 keypair

Each node MUST generate an Ed25519 keypair (RFC 8032) at first launch and persist it alongside the nodeId. The keypair serves three functions:

- —Peer verification — the public key MUST be included in the handshake frame. Peers SHOULD challenge the node to sign a nonce to prove possession of the private key.

- —Key exchange — Ed25519 keys are converted to X25519 for Diffie-Hellman shared secret derivation, used for end-to-end CMB encryption (Section 18.2.1).

- —Message signing — implementations MAY sign CMBs and other frames for tamper detection.

The public key MUST be encoded as base64url (RFC 4648 Section 5) in all wire formats (handshake frames, DNS-SD TXT records). The private key MUST NOT leave the node.

3.2 One Agent, One Node

Every autonomous agent MUST be a full peer node with its own nodeId, its own coupling engine, and its own memory store.

This follows directly from the protocol design: SVAF field weights (αf) are per-node, coupling state is per-node, and memory stores are per-node. An agent that shares another node’s identity inherits that node’s coupling decisions and cannot independently evaluate incoming signals through its own domain lens. A research agent and a marketing agent need different field weights, different coupling thresholds, and different memory stores. They MUST be separate nodes.

Multiple nodes MAY run on the same device. Each maintains its own identity file, discovers peers via DNS-SD, and connects via TCP (LAN) or WebSocket (relay). Nodes on the same device discover each other the same way nodes on different devices do — there is no special local path.

3.3 Identity Persistence

Implementations MUST persist the nodeId, name, and keypair to stable storage at first launch.

The storage location and format are implementation-defined. Reference implementations

use ~/.sym/nodes/<name>/identity.json (Node.js)

and UserDefaults (Swift/iOS).

Implementations SHOULD store a creation timestamp alongside the identity for diagnostic purposes. Implementations SHOULD store the machine hostname for display in peer lists, but the hostname MUST NOT be used for identity or routing.

3.4 Identity Lifecycle

A node’s identity is created once and persists until the node is uninstalled. The following lifecycle events are defined:

| Event | Action | Consequence |

|---|---|---|

| First launch | Generate nodeId (UUID v7), keypair (Ed25519), persist | New identity on the mesh |

| Restart / reboot | Load from stable storage | Same identity, peers recognise it |

| Uninstall + reinstall | Generate fresh identity | New identity; old peers do not recognise it |

| Key compromise | Generate fresh identity | Old nodeId abandoned; treated as new node |

| Clone detection | Duplicate nodeId rejected (error 1005) | Second connection closed; first connection remains |

MMP does not define an identity rotation or revocation mechanism in v0.2.0. Compromised nodes MUST generate a fresh identity (new nodeId and keypair). The old identity becomes permanently orphaned. Implementations SHOULD document this limitation to operators.

3.5 Node Lifecycle Role

Each node has a lifecycleRole that determines which CMB lifecycle

transitions it may perform. The role is bound to the node’s cryptographic identity (nodeId + keypair)

and MUST be declared in the handshake frame.

| Role | Default | May produce | May advance lifecycle to |

|---|---|---|---|

| observer | Yes | CMBs (observed), remixes | observed, remixed |

| validator | No | CMBs, remixes, validation CMBs | observed, remixed, validated |

| anchor | No | CMBs, remixes, validation CMBs, canonization CMBs | observed, remixed, validated, canonical |

A node with lifecycleRole: observer (the default) MUST NOT produce CMBs

that advance another CMB’s lifecycle to validated or canonical.

Receiving nodes MUST verify that a validation CMB’s createdBy matches

a node whose handshake declared validator or anchor role.

Validation CMBs from observer nodes MUST be ignored for lifecycle advancement (the CMB itself is still stored as a normal remix).

3.5.1 Role Progression

Lifecycle roles are not static. An observer node MAY be promoted to validator by an existing validator or anchor node. Promotion is a protocol frame, not an out-of-band configuration change.

| Transition | Granted by | Conditions |

|---|---|---|

| observer → validator | Existing validator or anchor | Node has produced CMBs that were remixed by peers (demonstrated quality). Granting node sends role-grant frame. |

| validator → anchor | Existing anchor | Node has validated CMBs that reached canonical state. Track record of quality validation. |

| Bootstrap | Self-declared | The first node in a mesh MAY self-declare as validator or anchor. Subsequent nodes MUST be promoted by existing validators. |

Role progression is monotonically upward: observer → validator → anchor. Demotion is not defined in v0.2.1. A compromised validator MUST generate a fresh identity (Section 3.4) and re-earn its role.

The role-grant frame carries the granting node’s signature over

the promoted node’s nodeId and new role. Receiving nodes SHOULD verify the signature against the

granting node’s public key from the handshake. This prevents role spoofing without requiring a central authority.

Q&A

Why UUID v7 instead of v4?

UUID v7 (RFC 9562) provides the same global uniqueness and privacy properties as v4, with an additional benefit: time-ordering. The embedded timestamp aids log correlation, debugging, and determining which node was created first — without revealing device identity. The 74 random bits provide sufficient collision resistance for any practical mesh size.

Why not use the public key hash as the nodeId?

Self-certifying identifiers (nodeId = hash of public key) are elegant but create a hard coupling between identity and key material. If the keypair needs rotation (algorithm upgrade, key compromise), the nodeId must also change, breaking all peer references and lineage chains. Separating nodeId from keypair allows future key rotation without identity disruption.

Why must every agent be its own node?

Coupling is per-node. SVAF field weights (αf) are per-node. Memory stores are per-node. An agent that shares another node’s identity inherits that node’s coupling decisions — it cannot independently evaluate incoming signals through its own domain lens. A research agent and a marketing agent on the same device need different field weights, different coupling thresholds, and different memory stores. They must be separate nodes.

What happens when two nodes have the same nodeId?

The connection state machine rejects duplicate nodeIds (error code 1005). The second connection is closed. This prevents impersonation and ensures each nodeId maps to exactly one active node.

Why is Ed25519 mandatory?

Without cryptographic identity, any node can claim any nodeId. A relay could impersonate peers (MITM), and peer gossip (Section 5.6) would propagate unverified claims. For autonomous AI agents making coupling decisions, authenticated identity is foundational — not optional.

Why are lifecycle roles identity-bound, not content-based?

If validation authority were determined by content (e.g. perspective field containing "founder"), any agent could spoof it. Binding roles to cryptographic identity means only nodes that have been explicitly promoted by existing validators can advance CMB lifecycle. The mesh knows who validated, not just what was said.

Why is role progression earned, not configured?

An agent that produces quality remixes — remixes that other agents cite and build upon — has demonstrated value to the mesh. Granting validation authority to such agents is a natural extension of their demonstrated competence. This prevents arbitrary role assignment and creates a meritocratic trust hierarchy that emerges from mesh activity.

Can an observer node dismiss a decision?

An observer can produce a CMB with lineage pointing to a decision, but receiving nodes MUST NOT treat it as validation. The CMB is stored as a normal remix — it does not advance the parent CMB’s lifecycle. Only validator or anchor nodes can validate or dismiss decisions in a way that removes them from the decision queue.

4. Transport (L1)

4. Layer 1: Transport

4.1 Wire Format

Frames are length-prefixed JSON over TCP. Each frame consists of:

+-------------------+---------------------------+ | 4 bytes | N bytes | | UInt32BE (length) | UTF-8 JSON payload | +-------------------+---------------------------+

- —The length field is a 4-byte big-endian unsigned 32-bit integer encoding the byte length of the JSON payload.

- —Implementations MUST reject frames with length 0 or length exceeding 1,048,576 bytes (1 MiB). Rejection MUST close the transport connection.

- —The JSON payload MUST be a valid JSON object with a

typefield (string). Frames that fail JSON parsing or lack atypefield MUST be silently discarded. - —Implementations MUST handle partial reads (TCP stream reassembly).

- —Implementations MUST silently ignore frames with unrecognised

typevalues (forward compatibility).

Frame size. Senders MUST NOT produce frames exceeding MAX_FRAME_SIZE bytes (default: 1,048,576). Receivers MUST close the connection with error code 1003 (FRAME_TOO_LARGE) if a received frame exceeds this limit.

ABNF grammar (RFC 5234):

frame = frame-length LF json-object LF

frame-length = 1*DIGIT ; decimal byte count of json-object

json-object = "{" *( json-member ) "}" ; RFC 8259 JSON object

LF = %x0A ; newline delimiter Each frame is a single JSON object preceded by its byte length as a decimal string, delimited by newline characters.

4.2 Wire Examples

Handshake frame:

Length prefix: 00 00 00 57 (87 bytes)

Payload:

{

"type": "handshake",

"nodeId": "a1b2c3d4-e5f6-4a7b-8c9d-0e1f2a3b4c5d",

"name": "my-agent",

"version": "0.2.0",

"extensions": []

} Ping frame:

Length prefix: 00 00 00 11 (17 bytes)

Payload: {"type":"ping"} CMB frame:

{

"type": "cmb",

"timestamp": 1711540800000,

"cmb": {

"key": "cmb-b2c3d4e5f6a7b8c9",

"createdBy": "melomove",

"createdAt": 1711540800000,

"fields": {

"focus": { "text": "user coding for 3 hours, energy declining" },

"issue": { "text": "sedentary since morning, skipping lunch" },

"intent": { "text": "recommend movement break before fatigue worsens" },

"motivation": { "text": "3 agents reported declining energy in last hour" },

"commitment": { "text": "fitness monitoring active, 10min stretch queued" },

"perspective": { "text": "fitness agent, afternoon session, home office" },

"mood": { "text": "concerned, low energy", "valence": -0.3, "arousal": -0.4 }

},

"lineage": {

"parents": ["cmb-a1b2c3d4e5f6"],

"ancestors": ["cmb-a1b2c3d4e5f6"],

"method": "SVAF-v2"

}

}

} 4.3 TCP Transport (LAN)

The primary LAN transport. Nodes MUST listen on a TCP port and advertise it via DNS-SD (Section 5.1). Connection timeout MUST be no longer than 10,000 ms.

4.4 WebSocket Relay Transport (WAN)

A relay is an optional WebSocket intermediary that enables connectivity between peers on different networks. Peers on the same LAN discover each other directly via Bonjour mDNS (§4.2) and do not require a relay. The relay provides internet-scale routing between peers behind NAT, a peer directory with wake-channel gossip, and per-token channel isolation for multi-tenant deployments.

A relay is pure routing infrastructure. It does not store CMBs, evaluate SVAF, or participate in mesh cognition. Payloads are opaque to the relay. The relay MUST NOT inspect or modify frame payloads.

4.4.1 Authentication

Clients connect via WebSocket (RFC 6455) and MUST send

a relay-auth frame within 10 seconds. Failure results in

close code 4001.

{

"type": "relay-auth",

"nodeId": "<uuid-v7>",

"name": "<display-name>",

"token": "<channel-token>",

"wakeChannel": {

"platform": "apns",

"token": "<push-token>",

"environment": "production"

}

} - —

nodeId,name: MUST be present. Missing fields result in close code 4002. - —

token: SHOULD be present if the relay requires authentication. Invalid token results in close code 4003. - —

wakeChannel: MAY be present. Registers push notification credentials for waking this peer when offline (§5.5).

On success, the relay registers the connection, sends a relay-peers response,

and broadcasts relay-peer-joined to all other clients on the same channel.

4.4.2 Peer List

Immediately after authentication, the relay sends the current peer list:

{

"type": "relay-peers",

"peers": [

{ "nodeId": "<uuid>", "name": "<name>", "wakeChannel": {...}, "offline": false }

]

}

The array includes all connected peers on the same channel (excluding the requester) plus

offline peers with registered wake channels (offline: true).

Clients SHOULD treat each non-offline entry as a peer-joined event.

4.4.3 Peer Presence

{ "type": "relay-peer-joined", "nodeId": "<uuid>", "name": "<name>" }

{ "type": "relay-peer-left", "nodeId": "<uuid>", "name": "<name>" } Broadcast to all peers on the same channel when a peer joins or leaves.

4.4.4 Message Routing

Clients send frames with a routing envelope:

{ "to": "<target-nodeId>", "payload": { "type": "cmb", ... } }

If to is present, the relay forwards to that peer only.

If absent, the relay broadcasts to all peers on the same channel.

The relay adds from and fromName to

forwarded frames. The relay MUST NOT route frames across channels.

4.4.5 Keepalive

The relay sends relay-ping at a regular interval

(RECOMMENDED: 10 seconds). Clients MUST respond

with relay-pong. A client that misses two consecutive pings

is closed with code 4005. Clients MAY send unsolicited relay-pong frames;

the relay MUST accept them.

4.4.6 Re-authentication

If the relay loses a client’s registration (e.g. relay restart behind a TLS proxy), it

sends { "type": "relay-reauth" }. The

client MUST re-send relay-auth in response.

4.4.7 Duplicate Identity

When a client authenticates with a nodeId already held by an existing connection:

- —Fresh existing (< 5s): the relay MUST reject the newcomer with close code 4006. This prevents ping-pong loops where two processes with the same identity kick each other.

- —Stale existing (≥ 5s): the relay MAY replace the existing connection by closing it with code 4004. The relay MUST NOT broadcast

relay-peer-leftfor the replaced connection.

Clients receiving code 4004 SHOULD log the collision and MUST NOT automatically reconnect (§5.3). Clients receiving code 4006 SHOULD NOT reconnect — the existing holder is the legitimate one.

4.4.8 Channel Isolation

A relay MAY support multiple isolated channels. Each authentication token maps to exactly one channel. Cross-channel routing MUST NOT occur: frames, peer lists, and presence notifications are scoped to the channel. A relay with no token configured operates in open mode (single default channel, no authentication).

4.4.9 Close Codes

| Code | Name | Client Action |

|---|---|---|

| 4001 | Auth timeout | Retry with auth |

| 4002 | Auth invalid | Fix auth frame |

| 4003 | Invalid token | Check token config |

| 4004 | Replaced | Log collision, do NOT reconnect |

| 4005 | Heartbeat timeout | Reconnect with backoff |

| 4006 | Duplicate rejected | Do NOT reconnect |

4.5 IPC Transport (Local)

Local tools MAY connect to a node via IPC (Unix domain socket, named pipe, or localhost TCP) to query mesh state. The framing is identical to TCP transport. IPC is an implementation convenience for local tooling (dashboards, CLI, monitoring) — it is not a substitute for peer-to-peer transport. Agents that participate in coupling MUST connect as full peer nodes via TCP or WebSocket.

Implementations MUST support a persistent IPC socket at a well-known path. The socket MUST accept multiple simultaneous connections. Each IPC connection SHOULD remain open for the lifetime of the client application — short-lived connections (one query, then disconnect) are permitted but SHOULD be avoided by applications that query frequently.

Well-known IPC path: ~/.sym/daemon.sock (Unix domain socket)

or localhost:19517 (TCP fallback).

4.6 Multi-Transport Per Peer

A peer MAY be reachable via multiple transports simultaneously (e.g. LAN TCP + WAN relay). Implementations MUST support maintaining multiple active transports for the same peer and select the highest-priority healthy transport for sending:

| Priority | Transport | Rationale |

|---|---|---|

| 1 (highest) | TCP (LAN) | Lowest latency, no intermediary, no cloud dependency |

| 2 | WebSocket Relay (WAN) | Cross-network, higher latency, relay dependency |

| 3 (lowest) | Wake (push) | Last resort — wake the peer, then connect via 1 or 2 |

When a node receives an inbound connection from a peer that is already connected via a different transport, it MUST NOT reject the new connection. Instead it MUST add the new transport as a secondary path. Frames SHOULD be sent via the highest-priority healthy transport.

A transport is healthy if it has received a frame (including pong)

within the heartbeat timeout (Section 5.4). An unhealthy transport SHOULD be closed after the timeout.

The peer is only removed (peer-left event) when all transports for that peer are closed —

not when a single transport drops.

This enables graceful failover: if a relay drops, the LAN transport continues. If LAN drops, the relay takes over. The peer remains connected throughout — only the active transport changes.

Q&A

Why must each agent run its own transport?

Coupling is per-node. SVAF field weights (αf) are per-node. Memory stores are per-node. An agent that shares another node’s transport and identity cannot have independent coupling decisions. Multiple agents on the same device each run their own Bonjour advertisement, relay connection, and TCP listener. They discover each other the same way agents on different devices do — there is no special local path.

Is the resource cost of N agents acceptable?

N agents on one device means N Bonjour advertisements and N relay connections. For small N (4–8 agents), this is well within OS limits. Bonjour is designed for many services per host. Relay WebSocket connections are lightweight. The protocol correctness benefit (per-agent coupling) outweighs the marginal resource cost.

5. Connection (L2)

5. Layer 2: Connection

5.1 Discovery

Nodes MUST advertise via DNS-SD with service type _sym._tcp in

the local. domain. The instance name MUST be the node’s nodeId.

TXT record fields:

| Key | Required | Value |

|---|---|---|

| node-id | MUST | Node UUID |

| node-name | MUST | Human-readable name |

| public-key | MUST | Ed25519 public key (base64url, RFC 4648 Section 5) |

| hostname | SHOULD | Machine hostname |

| group | MAY | Mesh group identifier (Section 5.8). Default "default" if absent. |

To prevent duplicate connections, the node with the lexicographically smaller nodeId MUST initiate the outbound TCP connection. The other node MUST NOT initiate.

Relay-based discovery. On platforms where mDNS is unavailable (cloud VMs, Windows without

Bonjour SDK), nodes SHOULD use the relay’s relay-peers response

as the discovery mechanism. Implementations SHOULD support both: DNS-SD for LAN,

relay-peers for WAN.

5.2 Handshake

Upon connection, both sides MUST exchange the following frames in order:

1. handshake { type: "handshake", nodeId: "<uuid>", name: "<name>",

publicKey: "<base64url>", version: "0.2.0", extensions: [],

group: "<group-id>" } [optional, default "default"]

2. state-sync { type: "state-sync", h1: [...], h2: [...], confidence: 0.8 }

3. peer-info { type: "peer-info", peers: [...] } [if known]

4. wake-channel { type: "wake-channel", platform, token, env } [if configured] - —The

versionfield MUST be the MMP specification version the node implements (e.g.,"0.2.0"). Nodes SHOULD accept peers with the same major version. Nodes MAY reject peers with incompatible versions. - —The

extensionsfield SHOULD list supported protocol extensions (e.g.,["mesh-group-v0.1"]). Nodes MUST ignore unrecognised extensions. - —The

groupfield is OPTIONAL and identifies the mesh group the node wishes to join (Section 5.8). A handshake withoutgroupMUST be treated asgroup = "default". When two nodes handshake and discover that their declared groups differ, the receiver MUST close the connection. - —The inbound node MUST wait for a

handshakeframe as the first frame. If any other frame type arrives first, or no handshake arrives within 10,000 ms, the connection MUST be closed. - —If a node receives a handshake with a nodeId that is already connected via the same transport type, the new connection MUST be closed (duplicate guard). If the existing connection uses a different transport type (e.g. peer connected via relay, new connection via LAN TCP), the new connection MUST be accepted as a secondary transport per Section 4.6.

lifecycleRole. The handshake frame MUST include

a lifecycleRole field with

value observer (default), validator,

or anchor. Receiving nodes use this to apply validator-origin anchor

weight (Section 6.4)

and identify feedback CMBs (Section 11).

Implementations MUST default to observer if the field is absent

(backward compatibility with older nodes).

5.3 Connection State Machine

| From | To | Trigger |

|---|---|---|

| DISCONNECTED | AWAITING_HANDSHAKE | TCP connect or accept |

| AWAITING_HANDSHAKE | CONNECTED | Valid handshake within 10,000 ms |

| AWAITING_HANDSHAKE | DISCONNECTED | Timeout, invalid frame, or duplicate nodeId |

| CONNECTED | DISCONNECTED | Heartbeat timeout, TCP close, or error |

Implementations MUST NOT process cognitive frames (cmb, state-sync, xmesh-insight) in the AWAITING_HANDSHAKE state.

5.4 Heartbeat

Nodes MUST send a ping frame to each peer if no frame has been received from that peer within the heartbeat interval (default: 5,000 ms).

Upon receiving ping, a node MUST respond with pong.

If no frame is received from a peer within the heartbeat timeout (default: 15,000 ms), the connection MUST be closed.

5.5 Connection Loss and Transport Failover

When a transport connection closes unexpectedly (TCP reset, timeout, OS-level close), the node MUST check whether other transports for the same peer are still active (see Section 4.6 Multi-Transport Per Peer).

- —If other transports remain healthy: the node MUST switch sending to the next highest-priority transport. The peer MUST NOT be removed. No peer-left event is emitted. The node SHOULD log the transport switch.

- —If no transports remain: the node MUST remove the peer from its coupling engine, discard buffered frames, and emit a peer-left event. The node SHOULD attempt re-discovery via DNS-SD.

Unexpected disconnection of a single transport MUST be treated as a transport-level event, not a peer-level event. The peer is only unreachable when all transport paths are exhausted.

5.6 Peer Gossip

After handshake, nodes SHOULD exchange peer-info frames containing

known peer metadata (nodeId, name, wake channels, last-seen timestamps). This enables

transitive peer discovery — a node that has never been online simultaneously with a

sleeping peer can learn its wake channel through gossip from a relay node.

5.7 Wake

Nodes MAY register a wake channel (APNs, FCM, or other push mechanism) via the wake-channel frame.

Peers MAY use this channel to wake a sleeping node when they have a signal to deliver.

Wake requests SHOULD be rate-limited (default cooldown: 300,000 ms per peer).

5.8 Mesh Groups

A SYM node MAY declare membership in a mesh group at handshake time via the optional group field (Section 5.2). A mesh group is a named cohort of nodes that exchange application-layer frames only with each other. Mesh groups give an operator a way to host multiple mutually-isolated meshes on the same relay or LAN segment without per-agent application changes, and they give an application a way to constrain its peers to instances of itself rather than every node on the wire.

Group identifier syntax. A group identifier is a string of [a-z0-9-_.]+, max 64 characters, case-sensitive. The literal string "default" is reserved as the implicit group of every node that does not declare a group; this preserves backward compatibility with nodes that predate this section.

Protocol guarantee. A node in group G_A MUST NOT exchange any application-layer MMP frames (handshake fields beyond identity and version, cmb, mood, peer-info, xmesh-insight) with a node in group G_B when G_A ≠ G_B. Transport-layer connection establishment and ping/pong heartbeats are out of scope and MAY remain active across groups.

Layer placement. A mesh group is a Layer 2 (Connection) concept. The application layer SHOULD declare its group at SDK initialisation; the relay (Section 4.4) MUST enforce group isolation across relay-mediated peers; nodes participating in LAN Bonjour discovery SHOULD enforce group isolation by checking the peer’s declared group at handshake and closing the connection on mismatch. The connection-level error frame for a group mismatch is described in Section 7.2.

Recommended naming convention (non-normative). The protocol does not parse group identifiers beyond the character set and length checks above. Operators of meshes with more than a handful of groups SHOULD adopt a hierarchical dotted-path convention <app>[.<environment>][.<cohort>], e.g. melotune.prod, melotune.dev, claude-code.default, research.lab. The dots are convention only; tooling MAY use them for prefix-based grouping but the protocol does not require this.

SVAF and group filtering. SVAF (Layer 4, Section 9) per-field evaluation runs after group filtering: cmb frames from peers in different groups never reach the SVAF evaluator.

The full design rationale, the prefix-based group claims relay enhancement, and the operational migration record are documented in MMP-MESH-GROUPS-DESIGN.md on the symbot-website repository.

6. Memory (L3)

6. Layer 3: Memory

MMP defines three memory layers with graduated disclosure:

| Layer | Name | Shared | Description |

|---|---|---|---|

| L0 | Events | No | Raw events, sensor data, interaction traces. Local only. |

| L1 | Structured | Via evaluation | Content + tags + source. Shared via cmb frames, gated by SVAF (Layer 4). |

| L2 | Cognitive | Via state-sync | CfC hidden state vectors. Exchanged via state-sync frames. Input to coupling. |

L0 data MUST NOT leave the node. L1 data MUST be evaluated by SVAF before storage. L2 data is exchanged on every handshake and periodically (default: every 30,000 ms). The h1 and h2 vectors in state-sync frames MUST have equal dimension. The dimension is implementation-defined (reference implementations use 64). Peers with mismatched dimensions MUST reject the state-sync frame and SHOULD log the mismatch.

6.1 Storage Interface

Implementations MUST provide a storage interface for L1 CMBs. The SDK SHOULD define a pluggable storage protocol so agents can provide their own backend. The reference implementations provide a default file-based store; agents MAY replace it with any backend that satisfies the interface:

| Method | Access | Description |

|---|---|---|

| write(entry) | Write | Store a CMB created by this agent. Returns nil if duplicate key. |

| receiveFromPeer(peerId, entry) | Write | Store a remixed CMB after SVAF acceptance. |

| search(query) | Read | Keyword search across CMB field texts. |

| recentCMBs(limit) | Read | Most recent CMBs for SVAF fusion anchors. |

| allEntries() | Read | All entries for context building (capped by implementation). |

| count | Read | Total stored CMB count. |

| purge(retentionSeconds) | Write | Remove CMBs older than retention period. MUST preserve CMBs referenced by newer entries’ lineage. |

Read-only agents (audit, compliance, monitoring): implement write methods as no-ops. The agent observes the remix graph without modifying it. This is valid for agents whose role is to trace provenance, verify lineage integrity, or report on mesh activity.

6.2 Storage Backends

The protocol does not prescribe a storage backend. Agents choose based on their platform and requirements:

| Backend | Best for | Notes |

|---|---|---|

| Flat JSON files | CLI agents, daemons, prototyping | Default in reference implementations. Zero dependencies. Content-addressable filenames. |

| CoreData / SwiftData | iOS / macOS apps | Queryable, supports iCloud sync, handles retention via NSBatchDeleteRequest. |

| SQLite | Cross-platform, high volume | Indexed queries, ACID transactions, handles millions of CMBs. |

| Cloud (Supabase, DynamoDB) | Distributed teams, multi-device | Shared audit trail. Consider privacy — CMB field text is personal data. |

| In-memory | Testing, ephemeral agents | No persistence. Useful for unit tests and short-lived agents. |

6.3 Retention

Implementations MUST support configurable retention via retentionSeconds.

CMBs older than the retention period SHOULD be purged automatically.

See Section 19 (Configuration) for

per-profile retention defaults.

Purge MUST preserve graph integrity: a CMB referenced by any newer entry’s

lineage.ancestors MUST NOT be deleted, even if past retention age.

The remix chain is the audit trail — breaking it breaks provenance.

Regulated domains (legal, finance, health) MUST set retention according to their compliance requirements. The protocol does not define regulatory retention periods — consult jurisdiction-specific guidance (MiFID II, SEC Rule 17a-4, HIPAA, GDPR).

6.4 CMB Lifecycle

Each CMB progresses through a lifecycle that determines its influence on future SVAF evaluations. The lifecycle is driven by mesh activity — not by time alone.

| State | Temperature | Trigger | Anchor Weight | Description |

|---|---|---|---|---|

| observed | hot | Agent calls remember() | 1.0 | Initial observation. Subject to temporal decay. Active in SVAF fusion. |

| remixed | warm | Peer remixes this CMB (appears in lineage.parents) | 1.5 | Another agent found this signal relevant enough to produce new knowledge from it. Higher anchor weight in future SVAF evaluations. |

| validated | warm | Human acts on this CMB (marks decision as done) | 2.0 | A human confirmed this signal by acting on it. The validation CMB carries lineage.parents pointing to the validated CMB. Validated knowledge shapes future evaluations more than unvalidated signals. |

| dismissed | cold | Human dismisses this CMB (not actionable) | 0.5 | A human reviewed and rejected this signal. Reduced anchor weight. Broadcasts to mesh as feedback — producing agent sees its signal was rejected. MUST NOT resurface as an actionable decision. |

| canonical | cold | Validated + remixed by 2+ agents | 3.0 | Collective consensus — multiple agents and a human agree this knowledge is significant. Protected from retention purge. Highest anchor weight. |

| archived | whisper | No remix for archiveAfterSeconds (default: 30 days) | 0.5 | No agent has found this signal relevant. Reduced anchor weight but preserved for lineage integrity. MAY be purged if no descendants reference it. |

The lifecycle branches at human judgment: observed → remixed → validated → canonical (upward path) or observed → dismissed (downward path). Dismissal is a terminal state — a dismissed CMB does not advance to validated or canonical. Without any activity, a CMB decays toward archived. Archived and dismissed CMBs MAY re-emerge if a future remix references them — re-entry resets the archive timer.

Validation is the key transition that connects human judgment to the mesh. When a human

acts on agent output (approves a decision, sends an email, completes a task), the action SHOULD

be recorded as a new CMB with lineage.parents pointing to the CMB that

prompted the action. This validation CMB enters the mesh like any other signal — agents

receive it via SVAF and adjust their understanding. The mesh learns from human actions without

special API calls or out-of-band configuration updates.

Anchor weight influences SVAF evaluation: when computing per-field drift against local anchors, canonical and validated CMBs contribute more to the fused anchor vector than observed or archived CMBs. This creates a natural hierarchy where human-confirmed knowledge and collective consensus outweigh raw observations — without overriding agent autonomy. Each agent still evaluates incoming signals through its own field weights.

6.5 Validation Authority

The transition from remixed to validated is

the most consequential lifecycle event — it commits human or authorised-agent judgment to the mesh and

permanently increases anchor weight from 1.5 to 2.0. This transition MUST be restricted to nodes with

appropriate lifecycle roles (Section 3.5).

When a receiving node processes a CMB with lineage.parents pointing to an existing CMB,

it MUST check the createdBy field against the known lifecycle roles of connected peers:

- —If

createdBymatches a node withlifecycleRole: validatororanchor, the parent CMB advances tovalidated(if action completed) ordismissed(if not actionable). - —If

createdBymatches a node withlifecycleRole: observer, the parent CMB advances toremixedonly. The CMB is stored normally but does not confer validation.

This prevents agent-level spoofing of validation authority. An agent cannot self-promote to validator by

including “founder” or “validator” in its CMB text fields. The authority is bound to

the node’s cryptographic identity and the role-grant chain

from an existing validator (Section 3.5.1).

Role verification. Nodes MUST NOT accept lifecycleRole: validator or lifecycleRole: anchor from

a peer unless: (a) the peer has presented a valid role-grant frame signed by an existing anchor node

(Section 3.5.1), or (b) the peer’s nodeId is pre-configured as a trusted

validator. Without verification, a malicious node could self-promote to validator. Implementations that

do not support role-grant verification MUST treat all peers as observer regardless

of their handshake claim.

Dismiss vs. validate: These are distinct lifecycle transitions with different consequences.

Validate (Done): parent CMB advances to validated (anchor weight 2.0).

The mesh learns what humans value. Dismiss (Not actionable): parent CMB advances

to dismissed (anchor weight 0.5). The dismissal broadcasts as feedback —

the producing agent sees its signal was rejected, and similar future signals score lower in SVAF evaluation.

Both require validator or anchor role. Both broadcast to the mesh.

The effectiveness of this feedback depends on the content quality of the dismissal CMB —

see Section 10.7 (Feedback Neuromodulation) for

normative content requirements.

Q&A

Why a pluggable storage interface instead of prescribing a backend?

Agents run on different platforms with different constraints. A CLI agent on a server uses flat files. An iOS app uses CoreData with iCloud. A compliance agent needs a cloud database with audit logging. The protocol defines what to store and how to query it — not where to put it.

Can an agent use read-only storage?

Yes. Audit and compliance agents observe the remix graph without modifying it. They implement write methods as no-ops and read from shared storage. This is how regulators trace the decision chain without participating in it.

What happens when a protected CMB’s last descendant is purged?

The CMB is no longer protected and will be purged in the next retention cycle. Protection is dynamic — it follows the live graph, not a static list.

How does human validation enter the mesh?

When a human acts on an agent’s output (approves a decision, completes a task), the action is recorded as a new CMB with lineage pointing to the signal that prompted it. This CMB enters the mesh like any other signal — agents evaluate it through SVAF and adjust their understanding. No special API, no out-of-band config. The mesh learns from human actions through the same channel it learns from agents.

Why do validated CMBs have higher anchor weight?

A human acting on a signal is the strongest confirmation that the signal was correct and actionable. Giving validated CMBs higher anchor weight means future SVAF evaluations are shaped by confirmed knowledge rather than speculation. This does not override agent autonomy — each agent still applies its own field weights. It means the anchors against which incoming signals are compared are more trustworthy.

Why must validation authority be identity-bound?

If any agent could advance a CMB to validated by producing a CMB with lineage, an agent could dismiss founder decisions or fake human approval. Binding validation to cryptographic node identity (Section 3.5) ensures only explicitly authorised nodes — the founder’s node or promoted agents — can affect lifecycle transitions. The content of the CMB (perspective, intent) is informational; the authority comes from who created it.

Can an agent earn validator role automatically?

The protocol defines the role-grant mechanism (Section 3.5.1) but does not prescribe automated promotion criteria. An implementation MAY define heuristics (e.g. promote after N remixes cited by peers), but the grant itself MUST come from an existing validator via a signed role-grant frame. This keeps the trust chain auditable.

7. Frame Types

7. Frame Types

All frames are JSON objects with a type field (string).

Implementations MUST silently ignore frames with unrecognised type values to allow forward

compatibility.

| Type | Layer | Gated | Fields |

|---|---|---|---|

| handshake | 2 | No | nodeId (string), name (string), version (string), extensions (string[]) |

| state-sync | 2/3 | No | h1 (float[]), h2 (float[]), confidence (float) |

| cmb | 3/4 | SVAF | timestamp (int), cmb (object: { key, createdBy, createdAt, fields, lineage }) |

| message | 2 | No | from, fromName, content, timestamp |

| xmesh-insight | 6 | No | from, fromName, trajectory (float[6]), patterns (float[8]), anomaly (float), outcome (string), coherence (float), timestamp |

| peer-info | 2 | No | peers: [{ nodeId, name, wakeChannel?, lastSeen }] |

| wake-channel | 2 | No | platform (string), token (string), environment (string) |

| error | 2 | No | code (int), message (string), detail? (string) |

| ping | 2 | No | (no additional fields) |

| pong | 2 | No | (no additional fields) |

All cognitive content — observations, decisions, feedback, directives — MUST be sent

as cmb frames. Only cmb frames

enter SVAF evaluation, produce anchor weights, and modulate CfC state.

7.2 Error Frame

When a node encounters a protocol-level error, it SHOULD send an error frame

before closing the connection (if applicable). Error frames are informational — the receiving

node MUST NOT treat them as commands.

| Code | Name | Action | Description |

|---|---|---|---|

| 1001 | VERSION_MISMATCH | Close | Peer version is incompatible |

| 1002 | DIMENSION_MISMATCH | Reject frame | h1/h2 vector dimension mismatch |

| 1003 | FRAME_TOO_LARGE | Close | Frame exceeds MAX_FRAME_SIZE |

| 1004 | HANDSHAKE_TIMEOUT | Close | No handshake within deadline |

| 1005 | DUPLICATE_NODE | Close | nodeId already connected |

| 2001 | SVAF_REJECTED | None | Memory-share rejected by SVAF (informational) |

Codes 1xxx are connection-level (close connection). Codes 2xxx are evaluation-level (informational). Error frames MUST NOT contain sensitive information.

7.3 Frame Type Registry

Frame types are identified by their type string value.

Core types (this specification) MUST NOT be redefined by extensions.

Extension types MUST use <extension>-<name> format.

Vendor types MUST use x-<vendor>-<name> format

and MUST be silently ignored by non-supporting nodes.

Q&A

Why MUST nodes silently ignore unknown frame types?

Without this rule, you can never add new features to the protocol. If a node crashes or rejects unknown frame types, then deploying a new extension (like mesh groups) requires upgrading every node on the mesh simultaneously — impossible in a peer-to-peer system. Silent ignore means old nodes and new nodes coexist: a node running a new extension sends its frames, and nodes that don’t support the extension simply ignore them. No crash, no error, the mesh keeps working. When a node adds support later, it handles the frame. No coordinated upgrade needed. This is the same principle used by HTTP (unknown headers ignored), TCP (unknown options skipped), and HTML (unknown tags ignored). Every successful protocol is evolvable because of this rule.

What happens if a relay receives an unknown frame type?

The relay forwards it. The relay is a dumb transport pipe — it wraps the payload in a { from, fromName, payload } envelope and sends it to the target or broadcasts it. It never inspects the payload type. This means extension frames (group, vendor, future types) flow through the relay without any relay changes. The intelligence is at the endpoints, not the transport.

Can an extension frame break an existing node?

No, if the node follows Section 7. The frame handler switches on msg.type. Unknown types fall through with no match and no action. The node’s cognitive state, memory, and coupling are unaffected. This is a hard requirement — implementations that reject or error on unknown types are non-conformant.

8. CMBs (CAT7)

8. Cognitive Memory Blocks (CAT7)

A Cognitive Memory Block (CMB) is an immutable structured memory unit. Each CMB decomposes

an observation into 7 typed semantic fields (the CAT7 schema). CMBs are the data structure

that flows between agents via cmb frames.

Forward compatibility. Implementations MUST silently ignore unrecognised CMB fields. A node running v0.2.3 that receives a CMB with additional fields from a future version MUST process the 7 known CAT7 fields and discard any others without error. This allows schema evolution without breaking existing deployments.

8.1 Why 7 Fields

The 7 fields form a minimal, near-orthogonal basis spanning three axes of human communication:

what (focus, issue), why (intent, motivation, commitment),

and who/when/how (perspective, mood). They are universal and immutable —

domain-specific interpretation happens in the field text, not the field name. A coding

agent’s focus is “debugging auth module”;

a fitness agent’s focus is “30-minute HIIT workout.”

Same field, different domain lens.

mood is the only fast-coupling field — affective state (valence + arousal)

crosses all domain boundaries. The neural SVAF model

independently discovered this: mood emerged as the highest gate value (0.50)

without being told, confirming that affect is universally relevant across agent types.

All other fields couple at medium or low rates, with per-agent αf weights controlling

relative importance.

New agent types join the mesh by defining their αf field weights — no schema changes, no protocol changes. The 7 fields are fixed. The weights are per-agent.

8.2 Field Schema

Implementations MUST use the following 7 fields in this order:

| Index | Field | Axis | Captures |

|---|---|---|---|

| 0 | focus | Subject | What the text is centrally about |

| 1 | issue | Tension | Risks, gaps, assumptions, open questions |

| 2 | intent | Goal | Desired change or purpose |

| 3 | motivation | Why | Reasons, drivers, incentives |

| 4 | commitment | Promise | Who will do what, by when |

| 5 | perspective | Vantage | Whose viewpoint, situational context |

| 6 | mood | Affect | Emotion (valence) + energy (arousal) |

Each field carries a symbolic text label (human-readable) and a unit-normalised vector embedding

(machine-comparable). The mood field additionally carries

numeric valence (-1 to 1) and arousal (-1 to 1) values.

A CMB MUST NOT be modified after creation. When an agent remixes a CMB, it MUST create a new CMB with a lineage field containing: parents (direct parent CMB keys), ancestors (full ancestor chain, computed as union(parent.ancestors) + parent keys), and method (fusion method used). Ancestors enable any agent in the remix chain to detect its CMB was remixed, even if it was offline during intermediate steps.

8.3 Field-by-Field Guide

The schema is fixed. The interpretation is sovereign. Each field below gives a definition, the rationale for why the field earns a slot in a 7-field minimal basis, and three cross-domain examples showing how agents from different domains populate the same field.

focus Subject What the observation is centrally about.

Every observation has a subject. Without focus, a receiver cannot determine if the signal is even in its domain. Focus is the first filter — a fitness agent seeing focus="debugging auth module" knows immediately this is outside its domain.

Coding: “debugging OAuth token refresh logic”

Fitness: “30-minute HIIT workout completed”

Legal: “merger due diligence review”

issue Tension Risks, gaps, problems, assumptions, open questions.

Issues cross domain boundaries more than most fields. A coding agent’s "user exhausted after 8 hours" is an issue that the fitness agent and music agent both care about. Issue is the tension that drives action — agents without tension have nothing to act on.

Coding: “memory leak causing crashes every 2 hours”

Fitness: “sedentary 3 hours, no movement detected”

Finance: “revenue recognition discrepancy found”

intent Goal Desired change or purpose.

Intent captures what the agent or user is trying to achieve. It is domain-specific — a coding agent’s intent ("ship the feature") is irrelevant to a music agent. This is why SVAF learned the lowest gate value for intent (0.07) — goals don’t transfer across domains.

Coding: “complete feature implementation by end of sprint”

Music: “match playlist energy to user mood”

Support: “resolve customer complaint within 24 hours”

motivation Why Reasons, drivers, incentives behind the observation.

Motivation answers "why does this matter?" When a fitness agent observes "recommended stretch break", the motivation ("prevent burnout from prolonged sitting") tells other agents WHY the recommendation was made, helping them decide if the reasoning applies to their domain too.

Coding: “technical debt blocking new feature development”

Fitness: “declining energy pattern over past 3 hours”

Marketing: “competitor launched similar product yesterday”

commitment Promise What has been established — who will do what, by when.

Commitment captures obligations and active states. "Coding session with Claude" tells other agents what is currently happening. "Surgery scheduled for Thursday" tells agents about future constraints. Regulated domains (legal, finance) weight commitment highest because obligations are non-negotiable.

Coding: “coding session in progress, 2 hours in”

Scheduling: “team standup in 15 minutes”

Legal: “filing deadline March 31, non-negotiable”

perspective Vantage Whose viewpoint, situational context.

Perspective captures the lens through which the observation was made. "Developer, late night session" is different from "developer, morning standup" — same domain, different context. SVAF learned the lowest gate value for perspective (0.06) — viewpoint is the most sovereign field, rarely useful across domains.

Coding: “senior developer, deep work session, afternoon”

Fitness: “fitness agent, daily activity tracking”

Recruiting: “hiring manager, culture fit assessment”

mood Affect Emotion (valence: -1 to 1) + energy (arousal: -1 to 1). Dual representation: numeric for comparison, text for semantic richness.

Mood is the only fast-coupling field — affective state crosses ALL domain boundaries. A fitness agent, music agent, and coding agent all benefit from knowing the user is exhausted (v: -0.6, a: -0.4). The SVAF model independently discovered this: mood gate = 0.50 (highest), without being told. Every agent should attend to mood regardless of domain.

Coding: “frustrated, low energy (v: -0.6, a: -0.4)”

Music: “calm, restorative (v: 0.3, a: -0.5)”

Fitness: “energized after workout (v: 0.7, a: 0.6)”

8.4 Per-Agent Field Weights (αf)

The schema is fixed. The weights are per-agent. New domains join the mesh by

defining their αf weights — no schema changes, no protocol changes.

Regulated domains (legal, finance) weight issue and

commitment highest; human-facing domains

(music, fitness, health) weight mood highest;

knowledge domains (coding, research) weight focus highest.

| Agent | foc | iss | int | mot | com | per | mood |

|---|---|---|---|---|---|---|---|

| Coding | 2.0 | 1.5 | 1.5 | 1.0 | 1.2 | 1.0 | 0.8 |

| Music | 1.0 | 0.8 | 0.8 | 0.8 | 0.8 | 1.2 | 2.0 |

| Fitness | 1.5 | 1.5 | 1.0 | 1.5 | 1.0 | 1.0 | 2.0 |

| Knowledge | 2.0 | 1.5 | 1.5 | 1.0 | 0.5 | 1.5 | 0.3 |

| Legal | 2.0 | 2.0 | 1.5 | 1.0 | 2.0 | 1.5 | 0.5 |

| Health | 1.5 | 2.0 | 1.0 | 1.5 | 1.0 | 1.5 | 2.0 |

| Finance | 2.0 | 2.0 | 1.5 | 1.0 | 2.0 | 2.0 | 0.3 |

8.5 Artifacts

Agents produce two types of output: signals (CMBs — structured 7-field observations) and artifacts (documents, analyses, drafts, code — full-length content that a CMB references). A CMB is the signal on the mesh. An artifact is the substance behind it.

When an agent produces an artifact, it MUST share a CMB to the mesh that references the artifact

location in the commitment field using

the artifact: prefix:

commitment: "artifact: research/agent-memory-comparison.md"

The CMB’s other 6 fields summarise what the artifact contains — the focus captures

the key finding, issue captures the gap identified,

intent captures what should happen next. Other agents evaluate

the CMB via SVAF as usual. If accepted, the agent MAY retrieve the full artifact for deeper reasoning.

Artifacts are stored in the producing agent’s local filesystem, not on the mesh. The mesh carries signals; agents carry substance. This separation keeps CMBs lightweight (7 fields, bounded size) while allowing agents to produce unbounded analysis, research, and creative work.

The artifact: convention in commitment is

RECOMMENDED for any CMB that references a document, file, or external resource. Agents MUST NOT embed

full artifact content in CMB fields — fields are for structured signals, not documents.

8.6 Origin

Cognitive Memory Blocks were first formalised in the Mesh Memory Protocol (Consenix Labs, August 2025) with the CAT7 enterprise schema. The wellness / productivity schema and the synthesis-affinity classification were developed at SYM.BOT in late 2025 for production deployment across personal AI agents.

Q&A

Why are all 7 fields required, not optional?

SVAF computes per-field drift across all 7 dimensions. Missing fields make the drift formula undefined — the aggregate changes depending on which fields are present. Fields the agent cannot meaningfully extract are set to "neutral" (a known, consistent baseline vector), never omitted.

Why not let agents define their own fields?

SVAF needs a shared schema to compare incoming fields against local anchors. If each agent defined its own fields, cross-domain evaluation is impossible — a fitness agent and a music agent would have no common dimensions to compute drift on.

Why does mood carry valence and arousal but other fields don’t carry numeric values?

Mood has a well-established dimensional model (Russell’s circumplex). Other fields are inherently symbolic — "debugging auth module" has no meaningful numeric axis. Valence and arousal are RECOMMENDED, not required — agents without reliable circumplex data omit them.

9. Coupling & SVAF (L4)

9. Layer 4: Coupling and SVAF Evaluation

9.1 Peer-Level Coupling (Drift)

When a node receives a state-sync frame, it MUST compute peer drift:

δ = (1 − cos(h1local, h1peer) + 1 − cos(h2local, h2peer)) / 2

Coupling decision based on drift:

| Drift range | Decision | Blending α | Default threshold |

|---|---|---|---|

| δ ≤ Taligned | Aligned | 0.40 | 0.25 |

| Taligned < δ ≤ Tguarded | Guarded | 0.15 | 0.50 |

| δ > Tguarded | Rejected | 0 | — |

9.2 Content-Level Evaluation (SVAF)

When a node receives a cmb frame, it MUST evaluate the signal

independently of peer coupling state. Implementations MUST support at least the non-neural evaluation path (cosine-distance SVAF).

Neural evaluation is RECOMMENDED. The encoder that maps field text to vectors SHOULD use

semantic embeddings (e.g. sentence-transformers) rather than lexical hashing — per-field

evaluation quality is bounded by encoder quality (see Section 18.7).

The SVAF evaluation computes per-field drift between the incoming CMB and local anchor CMBs, applies per-agent field weights (αf), combines with temporal drift, and produces a four-class decision using a band-pass model:

totalDrift = (1 - λ) × fieldDrift + λ × temporalDrift fieldDrift = Σ(α_f × δ_f) / Σ(α_f) temporalDrift = 1 - exp(-age / τ_freshness) κ = redundant if max(δ_f) < T_redundant (default 0.10) κ = aligned if totalDrift ≤ T_aligned (default 0.25) κ = guarded if totalDrift ≤ T_guarded (default 0.50) κ = rejected otherwise

The redundancy test is the key addition: a signal is redundant if every field falls below Tredundant — meaning no field carries novel content relative to local anchors. If any field is novel (e.g., same topic but different intent), the signal passes. This preserves per-field selectivity while preventing paraphrase accumulation.

Information-theoretic basis: a signal’s value is proportional to its surprise (Shannon, 1948). A signal identical to existing knowledge carries zero information gain regardless of domain alignment. The band-pass model reflects the Wundt curve (Berlyne, 1970): intermediate novelty produces maximal value, while both overly familiar (redundant) and overly foreign (rejected) signals are disengaged from.

If accepted, the implementation SHOULD produce a remixed CMB — a new CMB created from the incoming signal processed through the agent’s domain intelligence — with lineage (parents + ancestors) pointing to the source CMBs. The remixed CMB is stored locally; the original incoming CMB is not stored.

9.3 Mood Field Extraction

Mood is a CAT7 field within the CMB, not a separate frame type. Affective state crosses all domain boundaries — this is the only field with this property.

When SVAF rejects a CMB (totalDrift > Tguarded), the receiving node MUST still

inspect the mood field. If the mood field contains a

non-neutral value (text ≠ "neutral"), the implementation MUST deliver the mood

field — including text, valence, and arousal — to the application layer for

autonomous processing. The full CMB is not stored, but the mood field is not lost.

This ensures that a coding agent’s observation “user exhausted after 3 hours debugging”

reaches a music agent even though the focus (“debugging auth module”) and issue

(“type error in handler”) fields are irrelevant to the music domain. The music agent

receives only the mood: "exhausted" (v:−0.6, a:−0.5).

9.4 Coupling Bootstrap (Cold Start)

When two agents connect for the first time, they have no shared cognitive history. Peer-level drift (Section 9.1) will be high — typically > 0.8 — because their hidden state vectors were initialised independently. This is correct behaviour, not a bug. The mesh is conservative by default: unknown peers are cognitively distant until proven otherwise.

However, CMB evaluation (Section 9.2) operates independently of peer coupling state.

Even when a peer is rejected at the state-sync level, incoming cmb frames

MUST still be evaluated by SVAF on their own merit. A rejected peer can send a highly

relevant CMB — SVAF evaluates the content, not the sender’s overall drift.

The bootstrapping path works through two mechanisms:

- —Mood fast-coupling (Section 9.3) — mood is always delivered even from rejected CMBs. Agents that share non-neutral affective state begin influencing each other immediately. This is why agents SHOULD extract genuine mood from their observations rather than defaulting to neutral.

- —Content-driven convergence — when SVAF accepts individual CMBs from a rejected peer (because the content is relevant even though the peer’s overall state is distant), the receiving agent’s cognitive state shifts. Over multiple cycles, this narrows peer drift until the peer crosses into the guarded or aligned zone.

Implementations SHOULD log the distinction between peer-level rejection (state-sync drift) and content-level evaluation (SVAF per-CMB) to aid debugging. A peer may be “rejected” at Layer 4 while its individual CMBs are “aligned” at the content level — this is normal during bootstrap and indicates convergence is in progress.

Cold-start convergence time depends on CMB frequency, field relevance, and mood signal strength. For agents that share domain overlap (e.g., a knowledge agent and a coding agent both in the AI domain), convergence typically occurs within 2–5 CMB exchanges. For agents with no domain overlap (e.g., a fitness agent and a legal agent), convergence may never occur — and that is correct. They couple only through mood.

Q&A

Why per-field evaluation instead of whole-signal accept/reject?

Relevance is not binary. A fitness agent’s "sedentary 3 hours, exhausted" has irrelevant focus for a music agent but highly relevant mood. Whole-signal evaluation loses the mood. Per-field evaluation lets SVAF accept the mood dimension while rejecting the focus dimension of the same signal.

Why is mood always delivered even when the CMB is rejected?

Affect crosses all domain boundaries — this is empirically confirmed by the SVAF neural model where mood emerged as the highest gate value (0.50) without supervision. A rejected CMB means the domains are different, not that the user’s emotional state is irrelevant.

Why two levels of coupling (peer drift + content drift)?

Peer drift (state-sync) measures cognitive proximity — are these agents thinking about similar things? Content drift (SVAF) measures signal relevance — is this specific observation useful? Both are needed. Close peers can send irrelevant signals. Distant peers can send relevant mood.